Tractable and Expressive Generative Modeling with Probabilistic Flow Circuits

Neurosymbolic AI: Foundations and Applications, pages 183–222. Wiley Online Library, 2026

Research Agenda

I develop probabilistic AI systems that combine expressive generative modeling, tractable inference, and trustworthy reasoning. My work centers on probabilistic circuits and related generative models that represent uncertainty explicitly, answer probabilistic queries efficiently, and support reliable decisions under missing, noisy, or conflicting evidence.

Model: complex structured distributions

Infer: probabilistic queries efficiently

Evaluate: learning dynamics and reliability

Deploy: with robustness and human guidance

Research Map

The sections are connected by a single question: how can uncertainty-aware models become expressive enough to use, reliable enough to deploy, and structured enough to inspect?

Core Question

How can generative models capture complex data distributions while preserving useful probabilistic inference?

Approach

I develop probabilistic generative models that combine the semantics of tractable probabilistic circuits with the representational power of flows, geometry-aware routing, and learned representations.

Visual Model

Research Problems

Increase expressivity without silently breaking tractable inference.

Align neural transformations and local geometry with circuit factorization.

Learn probabilistic representations that remain useful when evidence is incomplete.

What This Enables

Models that can generate, score, marginalize, condition, and reason about missing or partial evidence in a single probabilistic framework.
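To make "score, marginalize, condition" concrete, here is a minimal sketch of a toy circuit. It is an illustration only, not one of the models from the papers listed on this page: the two-component mixture, its weights, and its leaf parameters (`WEIGHTS`, `LEAF_P`) are hypothetical values chosen for the example. The point it shows is that a missing variable is handled by letting its leaf evaluate to 1, so a marginal costs the same single bottom-up pass as a full joint.

```python
from typing import Dict, List, Optional

# Hypothetical toy circuit: one sum node (mixture) over two product nodes,
# each product node factorizing over two binary variables X0 and X1.
WEIGHTS: List[float] = [0.6, 0.4]
# LEAF_P[k][i] = P(X_i = 1) under mixture component k (made-up numbers).
LEAF_P: List[List[float]] = [[0.9, 0.2], [0.1, 0.7]]


def leaf(p_one: float, value: Optional[int]) -> float:
    """Bernoulli leaf. A missing value (None) is marginalized out and returns 1."""
    if value is None:
        return 1.0
    return p_one if value == 1 else 1.0 - p_one


def evaluate(evidence: Dict[int, int]) -> float:
    """Probability of the (possibly partial) evidence in one bottom-up pass."""
    total = 0.0
    for weight, params in zip(WEIGHTS, LEAF_P):
        product = 1.0
        for index, p_one in enumerate(params):
            # Variables absent from `evidence` are treated as missing.
            product *= leaf(p_one, evidence.get(index))
        total += weight * product
    return total


def conditional(query: Dict[int, int], evidence: Dict[int, int]) -> float:
    """P(query | evidence) as a ratio of two circuit evaluations."""
    return evaluate({**evidence, **query}) / evaluate(evidence)


joint = evaluate({0: 1, 1: 1})             # score a complete assignment
marginal = evaluate({0: 1})                # X1 is missing and summed out
posterior = conditional({1: 1}, {0: 1})    # P(X1 = 1 | X0 = 1)
```

Scoring, marginalization, and conditioning all reuse the same `evaluate` pass; only the evidence dictionary changes.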

Representative Papers

Selected work connected to this theme.

Neurosymbolic AI: Foundations and Applications, pages 183–222. Wiley Online Library, 2026

The 43rd International Conference on Machine Learning (ICML), 2026

The Eighth Workshop on Tractable Probabilistic Modeling (TPM), 2025

Core Question

How do generative models learn, converge, overfit, and generalize?

Approach

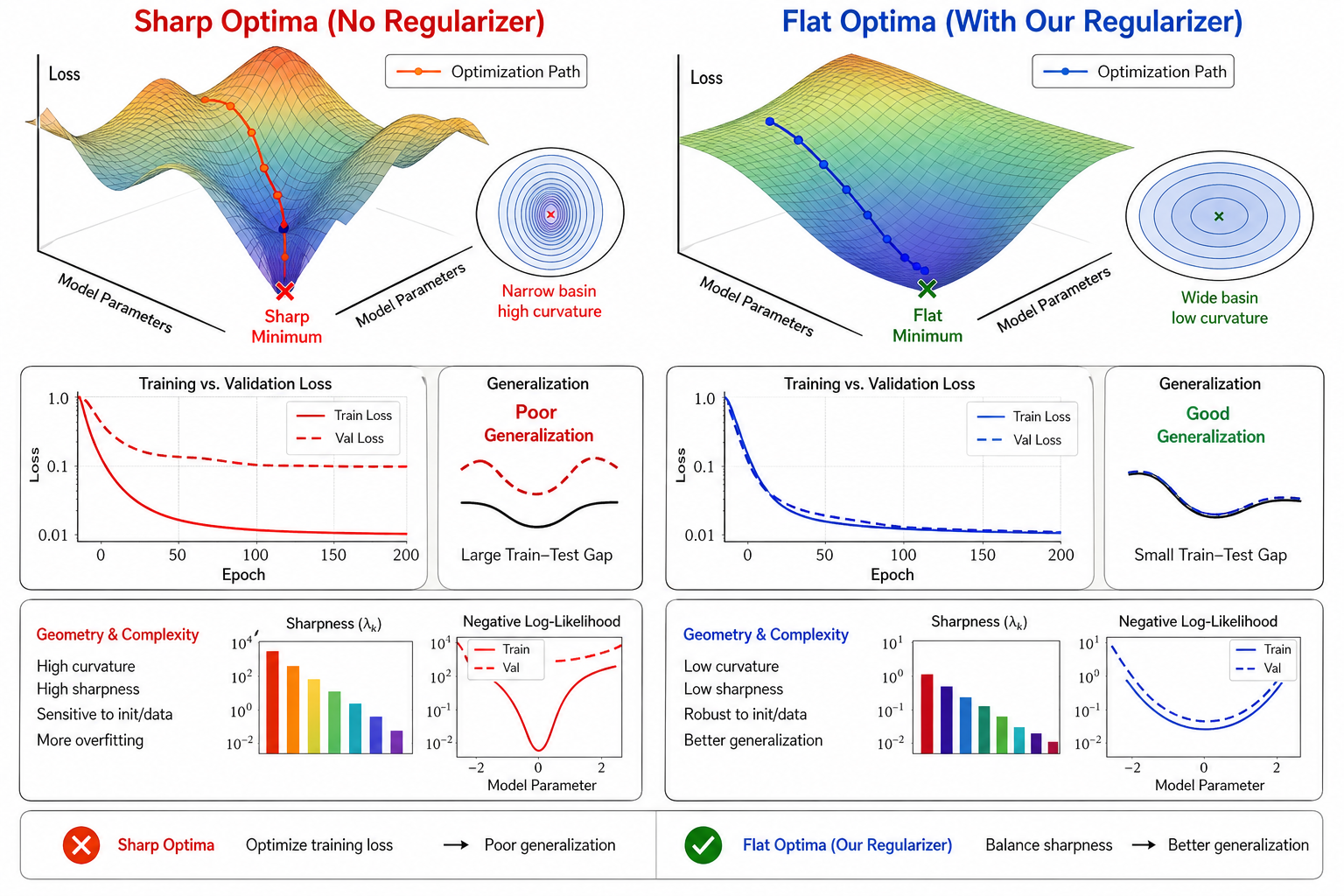

I study training behavior through measurable quantities such as duality gaps, curvature, sharpness, and tractable second-order structure, connecting adversarial training diagnostics with the optimization of probabilistic circuits (PCs).
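As a small illustration of what a duality gap can reveal, consider the bilinear game f(x, y) = x * y with both players restricted to [-1, 1]. This is a toy sketch under stated assumptions, not the estimator used in the cited work: the game, step size, starting point, and function names are all hypothetical. In this game the gap has a closed form, |x| + |y|, and it is zero only at the equilibrium (0, 0). The sketch compares simultaneous gradient descent-ascent, which is known to rotate away from the equilibrium on bilinear games, with the extragradient method, which contracts toward it.

```python
from typing import List


def clip(value: float) -> float:
    """Project a scalar onto the interval [-1, 1]."""
    return max(-1.0, min(1.0, value))


def duality_gap(x: float, y: float) -> float:
    """Gap of f(x, y) = x * y on [-1, 1]^2.

    max over y' of x * y' equals |x|; min over x' of x' * y equals -|y|,
    so the gap is |x| + |y|.
    """
    return abs(x) + abs(y)


def simultaneous_gda(x: float, y: float, lr: float = 0.1, steps: int = 2000) -> List[float]:
    """Simultaneous descent on x and ascent on y; records the gap before each step."""
    gaps = []
    for _ in range(steps):
        gaps.append(duality_gap(x, y))
        grad_x, grad_y = y, x  # df/dx = y, df/dy = x
        x, y = clip(x - lr * grad_x), clip(y + lr * grad_y)
    return gaps


def extragradient(x: float, y: float, lr: float = 0.1, steps: int = 2000) -> List[float]:
    """Extragradient: take a look-ahead step, then update with its gradients."""
    gaps = []
    for _ in range(steps):
        gaps.append(duality_gap(x, y))
        x_half, y_half = clip(x - lr * y), clip(y + lr * x)
        x, y = clip(x - lr * y_half), clip(y + lr * x_half)
    return gaps


gda_gaps = simultaneous_gda(0.5, 0.5)
eg_gaps = extragradient(0.5, 0.5)
```

Plotting `gda_gaps` against `eg_gaps` separates the two regimes: the first stays bounded away from zero as the iterates circulate near the boundary, while the second decays toward zero. A loss curve alone would not distinguish them as cleanly.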

Visual Model

Research Problems

Diagnose when adversarial generative training is converging or cycling.

Understand when expressive PCs overfit through sharp likelihood landscapes.

Use tractable model structure to design reliable generative learning objectives.

What This Enables

Training procedures whose behavior can be monitored, analyzed, and improved instead of treated as a black box.

Representative Papers

Selected work connected to this theme.

The 40th Annual AAAI Conference on Artificial Intelligence (AAAI), 2026

Proceedings of the 38th International Conference on Machine Learning (ICML), PMLR 139, 9660–9670, 2021

Core Question

How can AI systems remain reliable when evidence is limited, shifted, missing, noisy, or conflicting?

Approach

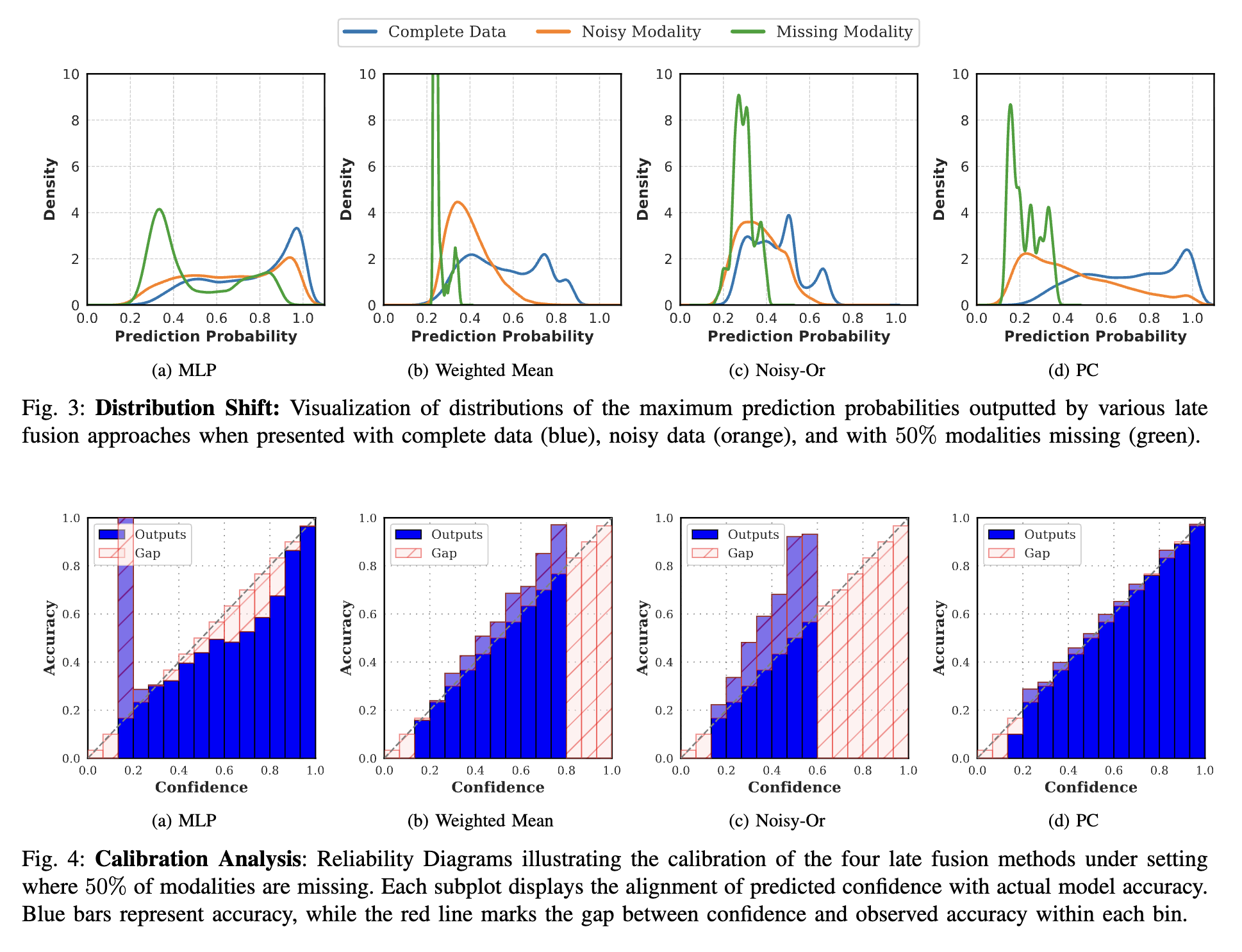

I design evaluation protocols and probabilistic models for deployment stressors: few-shot adaptation, task shift, missing modalities, corrupted sources, and conflicting information.

Visual Model

Robust multimodal fusion

Reliability analysis for late multimodal fusion with probabilistic circuits.

Research Problems

Stress-test adaptation when tasks differ from the training regime.

Model reliability under missing, corrupted, or contradictory modalities.

Expose deployment failures that average benchmark accuracy can hide.
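The missing and conflicting modality cases above can be sketched with the simplest possible generative fusion model. This is a hedged illustration, not the architecture from the papers listed here: it assumes each modality emits a discrete prediction, that predictions are conditionally independent given the true label (a single product node per class), and that the prior and per-modality confusion tables (`PRIOR`, `CONFUSION`) are made-up numbers.

```python
from typing import Dict, List, Optional

# Hypothetical class prior P(Y) for a binary label.
PRIOR: List[float] = [0.5, 0.5]
# CONFUSION[m][y][z] = P(modality m predicts z | true label y); made-up values.
# "image" is modeled as more reliable than "audio".
CONFUSION: Dict[str, List[List[float]]] = {
    "image": [[0.9, 0.1], [0.2, 0.8]],
    "audio": [[0.6, 0.4], [0.4, 0.6]],
}


def fuse(predictions: Dict[str, Optional[int]]) -> List[float]:
    """Posterior over the label given the unimodal predictions.

    A modality that is absent or set to None is marginalized out,
    which here means its factor is simply skipped.
    """
    scores = []
    for label, prior in enumerate(PRIOR):
        score = prior
        for modality, table in CONFUSION.items():
            predicted = predictions.get(modality)
            if predicted is not None:
                score *= table[label][predicted]
        scores.append(score)
    total = sum(scores)
    return [score / total for score in scores]


conflict = fuse({"image": 1, "audio": 0})          # sources disagree
missing = fuse({"image": 1, "audio": None})        # audio unavailable
no_evidence = fuse({})                             # falls back to the prior
```

With conflicting inputs, the posterior still leans toward the more reliable source, but with less confidence than when the weaker source is simply missing. That difference is the kind of behavior an average accuracy number would not surface.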

What This Enables

Systems that know when adaptation helps, when evidence is unreliable, and how to make decisions under realistic uncertainty.

Representative Papers

Selected work connected to this theme.

The 29th International Conference on Information Fusion (FUSION), 2026

The 27th International Conference on Information Fusion (FUSION), 2024

SN Computer Science, 4(5), 539. Springer, 2023

Core Question

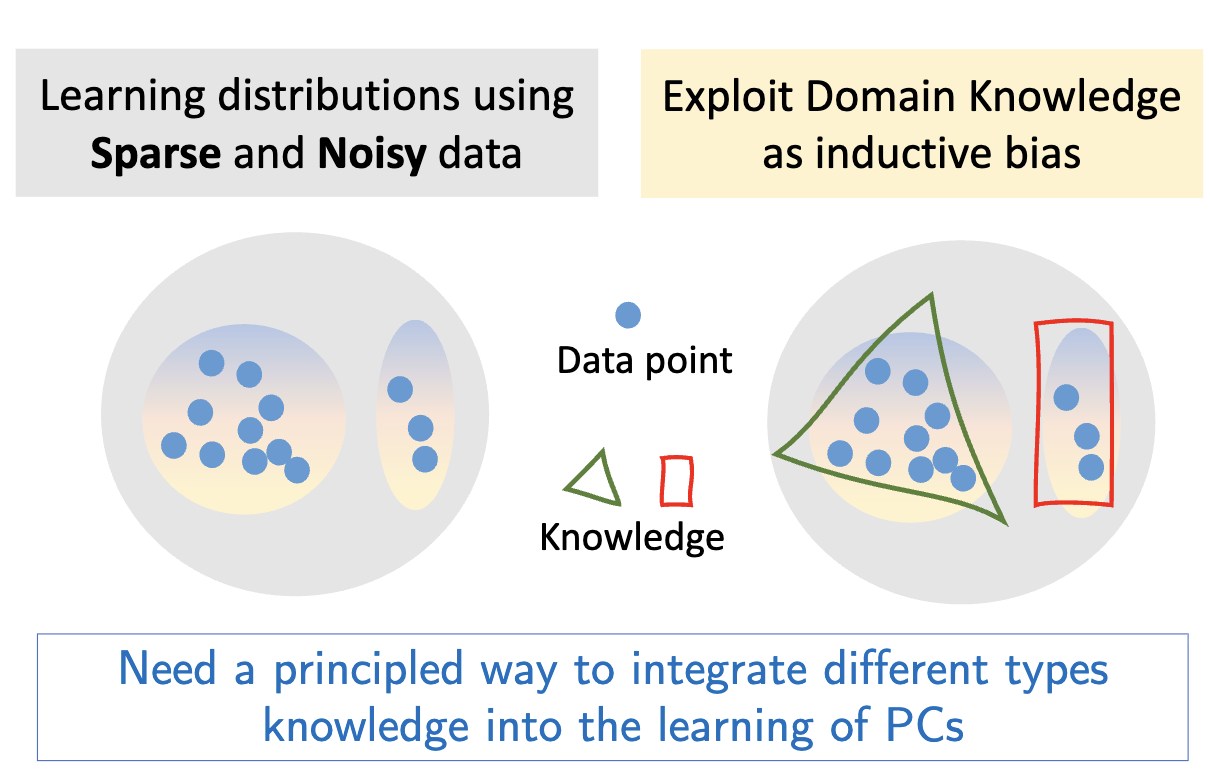

How can probabilistic models incorporate human guidance, domain knowledge, constraints, and credibility-aware reasoning?

Approach

I use tractable probabilistic inference as a substrate for constraint-aware learning, human feedback, knowledge-guided modeling, and auditable reasoning about source credibility.

Visual Model

Human-allied probabilistic circuits

A unified interface for incorporating feedback and constraints into PC learning.

Research Problems

Express domain knowledge as probabilistic constraints during learning.

Support human feedback without losing calibrated uncertainty.

Use inference queries to make credibility and reasoning inspectable.

What This Enables

AI systems that collaborate with human expertise, respect structured knowledge, and expose the probabilistic assumptions behind their decisions.

Representative Papers

Selected work connected to this theme.

The Twelfth Annual Conference on Advances in Cognitive Systems (ACS), 2025

The 29th Pacific-Asia Conference on Knowledge Discovery and Data Mining (PAKDD), 2025

The 39th Annual AAAI Conference on Artificial Intelligence (AAAI), 2025

Cross-Cutting Methods

Common tools and evaluation lenses that connect the research program.